Due to the data now being transferred from the Temp DB into your working database, this once again meant that the performance was really bad. So you are not really getting the benefit of having your data stored in memory.Ĥ. When using the Maximum Insert Commit size of zero and there is not enough memory on your server, SSIS then stores this data in the TempDB. I ran into an issue when it then had to complete the commit, which lead me to the following findings listed below.ģ. I recently was doing a large insert of data that was roughly 640 million rows, using the above method to get the data down as quickly as possible.Ģ.

#Sql server native client 10 faster oledb update

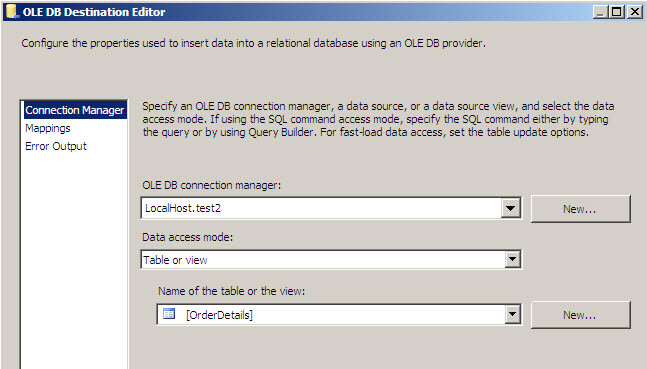

Update – Potential issue with the Maximum Insert Commit size (25 July 2013)ġ. If this happens to you, then configure the Maximum insert commit size, to accommodate your memory allocation. If you do NOT have enough memory, when SSIS tries to commit the transaction, it will then fail. You must ensure that you have enough memory in order to only commit the entire transaction once. NOTE : that the Maximum insert commit size is set to zero. Then ensure that you have configured with the following as shown below:Ĭ. Double click or right click on the OLE DB Destination and go into the Properties.ī. The final part is to configure the OLE DB Destination in the data flow task.Ī.

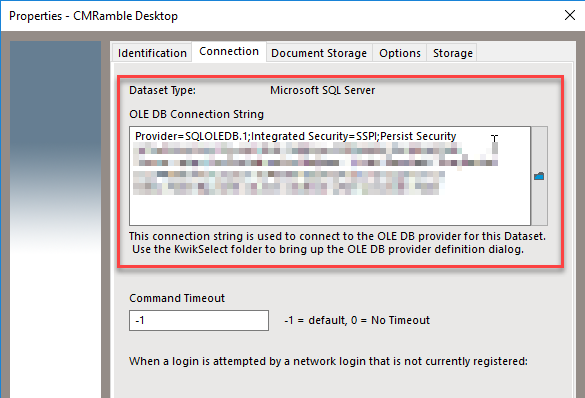

NOTE : This sometimes makes the query faster, and other times it can also slow the query down. Now what sometimes makes the source query faster is using the TSQL Hint: Option (Fast 10000)Ī. Next for the OLE DB Destination you will follow all the steps in Step 1, but this will just change to your destination Server.ģ. NOTE : It must be the above number, if you put it any higher the connection WILL failĢ. Scroll down near the bottom where it says Packet SizeĢ. Next click on All in the left hand column.ĭ. Then put in your Server Name and Database so that it will be shown as below:Ĭ. Where it says provider, from the drop down select:ī. Create your new OLE DB Source Connection, and then once it is open ensure that the following is selected: The first part is to configure your OLE DB Source Connection.Ī. Below is a chart to show how significant the difference is:Ĭonfiguration of OLE DB Data Flow Source and Destinations for maximum throughput over a network.īelow are the configuration settings for the final row in the table above.ġ.So when moving large amounts of data, this would mean that data transfers can go from hours to minutes.It was interesting to note, that the network card throughput was almost 10 x faster when changing from the ADO NET to OLE DB.The difference between the ADO NET source and ADO NET Destinations when compared to the OLE DB Source and OLE DB Destinations is significant.Set Packet Size on OLE DB Connection Manager to: 32767Ĭonfigured the OLE DB Source Connection Manager to use: Microsoft OLE DB Provider for SQL Server Set the Commit Size for the OLE DB Destination to zero “0”Ĭonfigured the OLE DB Destination Connection Manager to use: SQL Server Native Client 11.0 In TSQL Query used the Hint “Option (10000)”Ĭonfigured the OLE DB Source Connection Manager to use: SQL Server Native Client 11.0 Set Packet Size on ADO Connection Manager to: 32768 Both the Source and Destination tables had compression enabled on the physical tables.ĭata flow Destination Configuration Settings.At the time, there was nothing else running on the servers, they were both idle.

#Sql server native client 10 faster oledb how to

And then finally I have only detailed, based on the fastest data flow items how to configure the data flow source and destinations in order to get the maximum throughput.

Below is what the outcome of the tests are.My goal was to find out which combination of data flow Source and Destinations would enable me to transfer data over a network as quickly and as efficiently as possible.